A new procedure has been developed to help engineers build a safety case that explicitly and systematically establishes confidence in machine learning (ML) components long before they reach everyday users.

As more robots, delivery drones, smart factories and driverless cars appear, safety regulation of autonomous technologies remains a grey area. Global guidelines for autonomous systems are less stringent than those for other high-risk technologies, and current standards often lack detail, meaning that new products that use AI and ML may be unsafe when they reach the market.

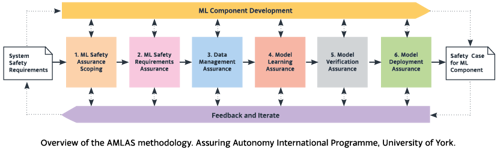

Developed by the Assuring Autonomy International Programme (AAIP) at the University of York, this new guidance is called the Assurance of Machine Learning for use in Autonomous Systems (AMLAS). The AAIP worked with industry experts to develop the process, which systematically integrates safety assurance into the development of ML components.

Dr Richard Hawkins, Senior Research Fellow and one of the authors of AMLAS, said: “The current approach to assuring safety in autonomous technologies is haphazard, with very little guidance or set standards in place. Sectors everywhere struggle to develop new guidelines fast enough to ensure that robotics and autonomous systems are safe for people to use.

“If the rush to market is the most important consideration when developing a new product, it will only be a matter of time before an unsafe piece of technology causes a serious accident.”

The AMLAS methodology has already been used in several applications, including transport and healthcare. In one of its healthcare projects, AAIP is working with NHS Digital, the British Standards Institution, and Human Factors Everywhere to use AMLAS to help create resources that support manufacturers to meet the regulatory requirements for their ML healthcare tools.

Dr Ibrahim Habli, Reader at the University of York and another of the authors, said: “Although there are many standards related to digital health technology, there is no published standard addressing specific safety assurance considerations. There is little published literature supporting the adequate assurance of AI-enabled healthcare products.

“AMLAS bridges a gap between existing healthcare regulations, which predate AI and ML, and the proliferation of these new technologies in the domain.”

An independent, neutral broker, AAIP connects businesses, academic research, regulators, and the insurance and legal professions to write new guidelines for safe AI, robotics, and autonomous systems.

“AMLAS can help any business or individual with a new autonomous product to systematically integrate safety assurance into the development of the ML components. We show how you can provide a persuasive argument about your ML model to feed into your system safety case. Our research helps us understand the risks and limits to which autonomous technologies can be shown to perform safely,” said Dr Hawkins.

“At York, we have a vast body of research into the best practices and processes for gathering evidence that can appraise the safety of these new complex technologies. We train people in the safe design, assessment and use of robotics and autonomous systems.

“Without a compelling argument about your ML model to feed into a system safety case, it is hard to assure the safety of your system. Developers can use our provided patterns, follow the process and instantiate their safety argument.”

The AAIP is a safety assurance group at the University of York and works in partnership with Lloyd’s Register Foundation, the charitable arm of Lloyd’s Register, which is dedicated to engineering a safer world.