Usually this type of capability would be deployed either through a powerful (and costly) applications processor or a microcontroller with additional components to accelerate key capabilities. The xcore.ai crossover processor from XMOS, however, has been architected to deliver real-time inferencing and decisioning at the edge, as well as signal processing, control and communications, enabling electronics manufacturers to integrate high-performance processing and intelligence economically into their products.

Smart devices typically require energy-hungry and costly connectivity to the cloud. According to XMOS, this reliance brings challenges around latency, connectivity, privacy and energy consumption. By providing efficient, high-performance compute at the edge, xcore.ai aims to address each of these challenges while keeping cost low.

xcore.ai is being described as a new generation of embedded platform: a versatile, scalable, cost-effective and easy-to-use processor. With its fast processing and neural network capabilities, it has been designed to let data be processed locally and actions be taken on the device within nanoseconds.

XMOS CEO Mark Lippett said: “xcore.ai delivers the world’s highest processing power for a dollar. This, coupled with its flexibility means electronics manufacturers (no matter their size) can embed multi-modal processing in smart devices to make life simpler, safer and more satisfying for all.”

Product demos will be available from June 2020.

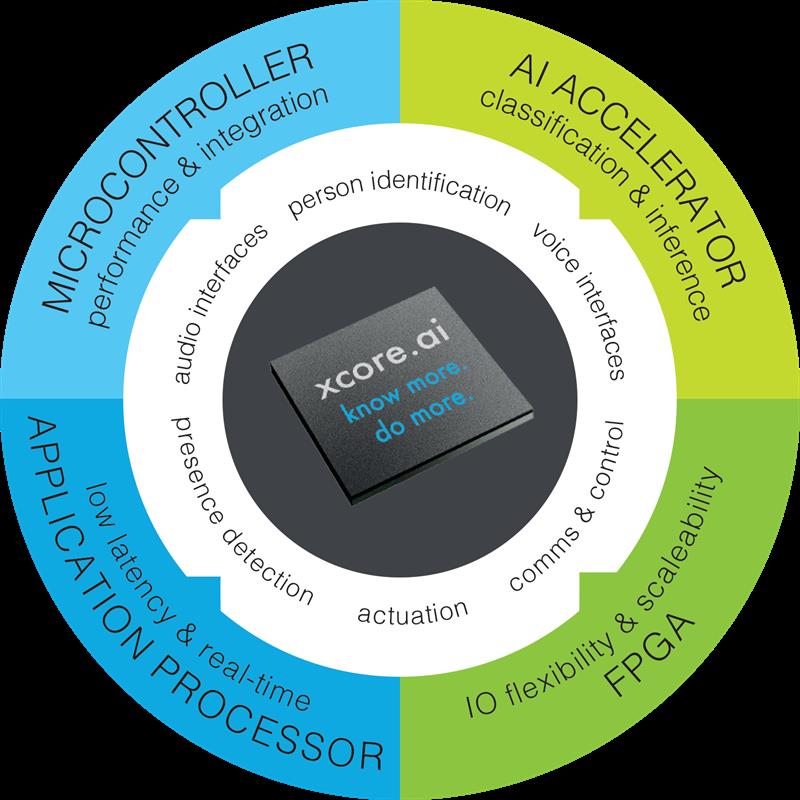

A scalable, multi-core crossover processor, xcore.ai has been designed for use at the edge, where it can interpret data without communicating with the cloud. It is intended to deliver the performance of an applications processor with the ease of use of a microcontroller, enabling embedded software engineers to deploy many different classes of processing workload on a single multicore crossover processor.

Programmability

- Fully programmable in C, with features such as DSP and machine learning accessible through optimised C libraries.

- Supports the FreeRTOS real-time operating system, enabling developers to use a broad range of familiar open-source library components.

- A TensorFlow Lite to xcore.ai converter allows easy prototyping and deployment of neural network models.

Connectivity

- Up to 128 pins of flexible IO (programmable in software) give access to a wide variety of interfaces and peripherals, which can be tailored to the precise needs of the application.

- Integrated hardware USB 2.0 PHY and MIPI interface for collection and processing of data from a wide range of sensors.

Binarized Neural Networks

- Supports deep neural networks that use binary values for activations and weights instead of full-precision values, dramatically reducing execution time.

- With binarized neural networks, xcore.ai delivers 2.6x to 4x the efficiency of equivalent 8-bit networks.
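The speed-up from binarized networks comes from replacing multiply-accumulate loops with bitwise operations. When every weight and activation is +1 or -1, each value fits in one bit (8x less storage than an 8-bit value), and a dot product reduces to the well-known XNOR/popcount trick, written below with XOR so that it counts mismatches instead of matches. The following is a minimal, portable C sketch of that general technique, not XMOS's library code: it uses no xcore.ai-specific instructions, the function names are hypothetical, and it assumes vectors of at most 64 elements packed into a single word, with values >= 0 treated as +1.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helper: pack the signs of n values (n <= 64) into one word.
   Assumed encoding: bit i = 1 means +1 (v[i] >= 0), bit i = 0 means -1. */
static uint64_t pack_signs(const int8_t *v, int n) {
    assert(n > 0 && n <= 64);
    uint64_t bits = 0;
    for (int i = 0; i < n; i++) {
        if (v[i] >= 0) {
            bits |= (uint64_t)1 << i;
        }
    }
    return bits;
}

/* Portable population count; a compiler or DSP may map this to one instruction. */
static int popcount64(uint64_t x) {
    int count = 0;
    while (x) {
        x &= x - 1; /* clear the lowest set bit */
        count++;
    }
    return count;
}

/* Dot product of two packed {-1,+1} vectors of length n.
   Matching bits contribute +1 and mismatching bits -1, so
   dot = matches - mismatches = n - 2 * popcount(a XOR b). */
static int binary_dot(uint64_t a, uint64_t b, int n) {
    uint64_t mask = (n == 64) ? ~(uint64_t)0 : (((uint64_t)1 << n) - 1);
    return n - 2 * popcount64((a ^ b) & mask);
}

/* Reference: the same dot product on 8-bit values with a 32-bit accumulator,
   i.e. one multiply-accumulate per element. */
static int32_t int8_dot(const int8_t *a, const int8_t *b, int n) {
    int32_t acc = 0;
    for (int i = 0; i < n; i++) {
        acc += (int32_t)a[i] * (int32_t)b[i];
    }
    return acc;
}
```

For two 8-element vectors of +1/-1 values, `binary_dot(pack_signs(a, 8), pack_signs(b, 8), 8)` returns the same result as `int8_dot(a, b, 8)`, but it replaces eight multiply-accumulates with one XOR, one mask and one popcount. This sketch only illustrates the principle; it does not reproduce or measure the 2.6x to 4x figure quoted by XMOS.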

Product highlights:

· xcore.ai incorporates DSP and machine learning capability together with scalar, floating-point, fixed-point and vector instructions to deliver efficient control

· 16 real-time logical cores, with support for scalar/float/vector instructions, enabling flexibility and scale depending on application

· Flexible IO ports with nanosecond latency, ensuring time-critical response across applications

· Support for binarized, 8-bit, 16-bit and 32-bit neural network inference, delivering on-device intelligence

· Complex multi-modal data capture and processing, enabling concurrent on-device applications spanning classification, audio interfaces, presence detection, voice interfaces, communications and control, and actuation

· High-performance instruction set for digital signal processing, machine learning and cryptographic functions

· On-device inference of TensorFlow Lite for Microcontrollers models, offering a familiar development environment