Embedded system designers face an increasing challenge as they create products that must function in an ever-more-connected, and potentially more hostile, context.

The idea of “adequate” security, i.e. that compromising a system should cost an attacker more effort than the gains are worth, has become more problematic as connectivity increases. Access to an embedded system whose purpose might be to control something relatively mundane can be priceless to those with malicious intent, because a vulnerable device can act as a gateway to wider infrastructure.

The problem of security has become multi-dimensional. There is the matter of IP security and how to prevent code being copied and the design cloned. Then, the intended functionality of the systems has to be protected; the design should be secure against being subverted and made to operate in an unintended manner. Of course, the data that the system is primarily intended to handle should be made secure against being copied, as should the details of users.

An attacker should not be able to use the embedded system as an entry-point to gain access to a wider IT infrastructure.

Cryptojacking

To all of these, a new threat has recently been added: the possibility of stealing compute cycles from a system (embedded or otherwise). This is the phenomenon of “cryptojacking”, in which a script is added to the target system and, alongside its normal operation, runs crypto-currency “mining” algorithms in the background, quietly reporting its results via any open port it can access.

The effects may be quite insidious; no data is stolen, no privacy compromised, no malicious actions initiated: the only effect is that CPU cycles “go missing”.

For an embedded system that must exhibit real-time or near-real-time response, that could manifest as a lack of performance at a critical moment. Anecdotally, cryptojacking has mainly been implemented by way of hidden code on web pages. It is not difficult, though, to imagine an embedded system based around a Linux distribution, inadvertently left with an unprotected internet connection, being exploited in the same way. In a similar vein, systems with internet connectivity are open to being taken over as “bots”, becoming engines of a third party’s attacks.

For embedded systems designers, security is clearly a problem; what is less clear is what they should do about it.

The software content of an embedded design often contains code drawn from a variety of sources. Some is written specifically for the project; some may be reused from earlier projects. There may be segments derived from the suppliers of a system’s hardware, such as IP for peripheral drivers for on-chip functions, and there may also be open-source blocks of code imported into a design. So, at the very least, all of the sections of an application’s software should be available in source form, so that each can be reviewed.

The desktop environment has been a battleground for many years, and in that space an ecosystem has evolved to counter threats to security.

In the embedded space, no such support structures exist. Indeed, as the code in most embedded system designs goes into the field in the form of a single binary executable, the notion of a patch hardly applies.

A complete (version) update is the only option, which in most cases will require user/operator intervention, typically by way of loading a new flash configuration.

The mechanism for doing so also becomes an aspect of the design that must be secured. That is to say, there should be (at least) a robust login barrier giving access to an admin user level before the system will accept an authenticated update.
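As a minimal sketch of the two checks described above, the following Python fragment accepts an update only when an admin session is active and the image carries a valid authentication tag. It is an illustration under stated assumptions, not any vendor's scheme: the names `DEVICE_KEY`, `sign_image` and `accept_update` are hypothetical, and a shared-key HMAC-SHA256 tag stands in for whatever authentication a real product uses. Production update schemes commonly use asymmetric signatures instead, so that the device holds only a public key.

```python
import hashlib
import hmac

# Hypothetical per-device secret, for illustration only. In a real product
# this would live in protected storage, never in source code.
DEVICE_KEY = b"example-key-for-illustration-only"

TAG_LEN = hashlib.sha256().digest_size  # 32 bytes for SHA-256


def sign_image(firmware: bytes, key: bytes = DEVICE_KEY) -> bytes:
    """Vendor side: append an HMAC-SHA256 tag to the firmware image."""
    tag = hmac.new(key, firmware, hashlib.sha256).digest()
    return firmware + tag


def accept_update(package: bytes, admin_logged_in: bool,
                  key: bytes = DEVICE_KEY) -> bool:
    """Device side: accept the package only if an admin session is active
    and the tag at the end of the package matches the image."""
    if not admin_logged_in:
        return False  # login barrier comes first
    if len(package) <= TAG_LEN:
        return False  # too short to contain an image plus a tag
    firmware, tag = package[:-TAG_LEN], package[-TAG_LEN:]
    expected = hmac.new(key, firmware, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(tag, expected)
```

In this sketch a package that has been altered in transit, or one presented without an admin login, is rejected before any flash write would take place.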

One vendor that has emphasised this aspect is STMicroelectronics, which has indicated it is working on a package for Secure Firmware Upgrade, to be deployed across its portfolio with a range of encoding and authentication schemes.

Prescriptions for actions to be taken to ensure an adequately secure product are many, but a common theme is to start with the basics.

Thinking of all the ways a project might be vulnerable may not come naturally to designers, many of whom are already multi-tasking between hardware and software aspects of the product. Setting aside budget for an outside specialist consultant may yield useful input, and is probably best done at the architecting stage, rather than as a retrospective audit.

With respect to software, adherence to accepted standards should go a long way to imparting resistance to well-known methods of attack.

Specialist software tool vendors offer tools using techniques such as static analysis that are intended to be applied as software is written and developed, to “bake in” the requirements of standards such as MISRA, JSF, or HIC++. Code that is compliant with such standards should be resistant to targeted attacks such as forcing stack overflows. The same tool sources caution, however, that while such compliance helps towards ensuring security, it is not enough by itself; it is necessary, but not sufficient.

Arm cores

Given the market share of Arm-core-based microcontrollers in the embedded space, there is a high probability that the silicon in a project will employ an Arm core.

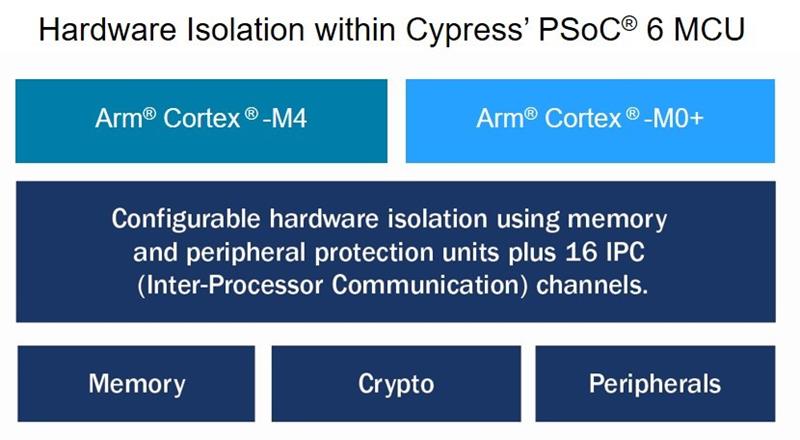

Arm has extended its TrustZone technology to cover Cortex-M MCUs, in addition to the Cortex-A series of cores.

TrustZone creates a trusted execution environment, from trusted boot-up onwards, and embodies the concept of parallel trusted and non-trusted contexts within a single system.

Secure and non-secure domains are hardware separated, with non-secure software blocked from accessing secure resources directly.

Arm says, “TrustZone for Cortex-M is used to protect firmware, peripheral and I/O, as well as provide isolation for secure boot, trusted update and root of trust implementations while providing the deterministic real-time response expected for embedded solutions.”

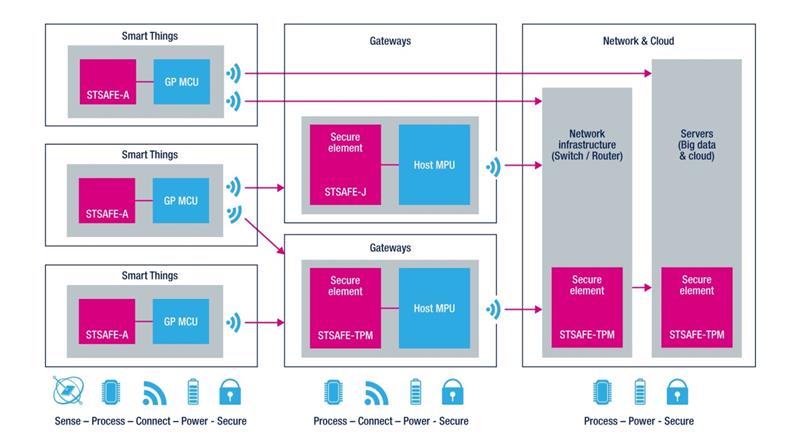

Microcontroller silicon for the embedded sector typically includes functionality to simplify implementing security features, such as hardware optimised to run encryption algorithms. Of equal importance, the MCU makers typically provide the software IP needed to stitch that functionality into the fabric of an application. A focus on end-to-end security in IoT means security at the chip level, over the air, at the server and up to the cloud. Security in a design that includes wireless connectivity must be continuous across the verification of identity and protection of keys, the integrity of data, and the code that constitutes the design.

It is also possible to concentrate on the subtle at the expense of the mundane; many exploits [hacks and attacks] are very simple. Access is granted or achieved because people simply forget to enable security, or leave a default password in place. “Trivial things matter in security,” as one observer has put it.

If your product has a password-protected admin or maintenance mode, at the very least, ensure that every unit shipped is pre-set with a unique and robust password. You appreciate that while your design may “only” control the lighting, or monitor HVAC parameters, it will also have access to the client’s corporate IT system.

The technician who commissions it might overlook that fact, and also the fact that leaving the installation with “pa55word” enabled is less than ideal.
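One way to picture the “unique and robust password per unit” advice is the short Python sketch below, which uses the standard-library `secrets` module to generate a random password for each serial number at provisioning time. The function names, the serial-number format, the 16-character length, the 12-character minimum and the small deny-list are all assumptions made for illustration; a real production line would also need a secure way to deliver each password to the customer.

```python
import secrets
import string

ALPHABET = string.ascii_letters + string.digits

# Hypothetical deny-list of well-known defaults, for illustration only.
WEAK_DEFAULTS = {"password", "pa55word", "admin", "12345678"}


def generate_unit_password(length: int = 16) -> str:
    """Return a random password drawn from a cryptographically secure source."""
    if length < 12:
        raise ValueError("password length below the assumed minimum of 12")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def provision(serial_numbers):
    """Map each serial number to its own password, with no two units sharing one."""
    passwords = {}
    used = set()
    for serial in serial_numbers:
        candidate = generate_unit_password()
        while candidate in used:  # vanishingly unlikely, but cheap to rule out
            candidate = generate_unit_password()
        used.add(candidate)
        passwords[serial] = candidate
    return passwords


def is_acceptable(password: str) -> bool:
    """Reject short passwords and well-known defaults at commissioning time."""
    return len(password) >= 12 and password.lower() not in WEAK_DEFAULTS
```

A check such as `is_acceptable` could also run when the commissioning technician changes the password, so that a unit cannot be left in service with a trivial one.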

In many ways, this is not a comfortable place for the engineer to be. There is no single, easy way of assessing security and identifying “bulletproof” corrective actions. Mechanisms for updates are fragmentary, yet designers are creating systems that are being deployed now and that will be in service for a decade or more.

Without being overly alarmist, there may be legal minds poised to explore a new area of product liability. In the emerging connected world, how much attention to security might be considered “necessary”, “adequate”, or “sufficient”?

Help is at hand, however: the chipmakers realise that they must not only provide the cryptography and other hardware elements of secure solutions, but also aid their customers in assembling the complete package.

Author details: Cliff Ortmeyer is Global Head of Technical Marketing, Farnell