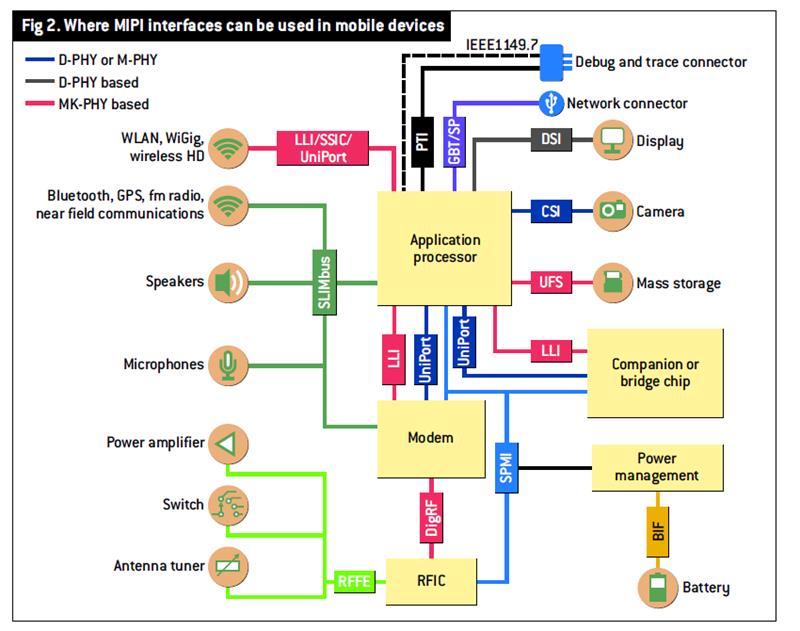

The Alliance's task was to standardise the chip interfaces used within handsets and, in particular, to develop interfaces for the handset's display and camera using a low power, low noise physical layer (PHY) device. But the Alliance's scope has grown to include chip to chip links, not just interfaces for the handset's application processor.

"Given how the mobile handset was evolving, we realised we could not continue to define the roadmap for the mobile terminal if we only defined the interfaces between the application processor and peripherals," said Joel Huloux, chairman of the MIPI Alliance.

Now, MIPI interfaces are being adopted in consumer devices such as tablets and digital cameras, and in other industries such as automotive and health care.

"One activity, which is likely to go cross industry, is the whole business of sensors," said Rick Wietfeldt, technical steering group chair at the MIPI Alliance. "By sensors, we mean a lot of things: gyros and accelerometers – things that are in devices today – but also others, like temperature and even medical sensors."

MIPI has been working with the sensor industry for more than six months to understand its requirements before developing the sensor interface specification. "We recognise the diversity in the sensor industry," said Wietfeldt.

MIPI interfaces

Earlier in 2013, the MIPI Alliance announced the latest versions of two of its interfaces: the low latency interface v2.0 (LLI v2.0) and the camera serial interface (CSI-3), which connects to the camera sensor.

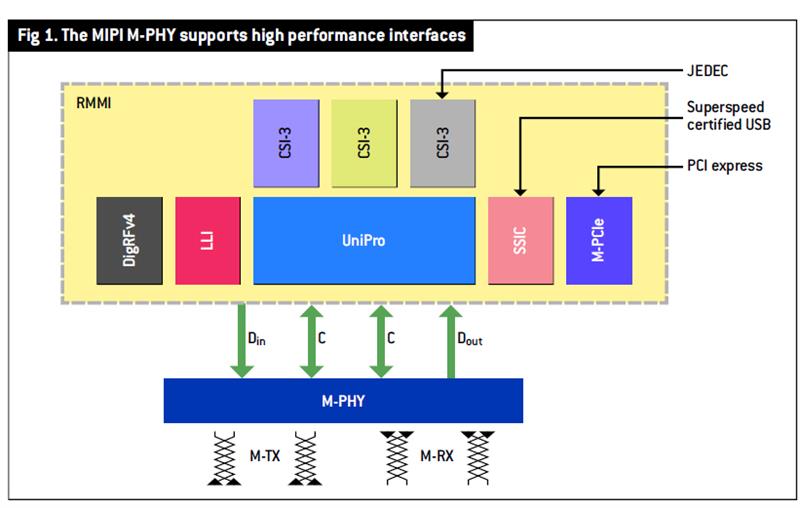

Also announced was M-PHY v3.0, or Gear 3, the latest version of the PHY that operates at the layer below MIPI's protocol specifications. There are three M-PHY specifications: Gear 1, a single lane M-PHY, operates at 1.25 to 1.45Gbit/s; Gear 2 operates from 2.5 to 2.9Gbit/s; while Gear 3 operates at up to 5.8Gbit/s.

The LLI protocol specification was developed to link the application processor and wireless modem chip. To lower system cost, LLI was specified to enable the modem to operate using the application processor's memory. But for the modem chip to access the DRAM via the application processor, the interface needs a latency of less than 100ns.

LLI v2.0 is backwards compatible with the original LLI, but adds a new frame format to improve the link efficiency as well as power management features. "The whole point of these interface evolutions is that you need to get something to market quickly, so LLI v1.0 had a simpler power management scheme," said Wietfeldt. LLI v2.0 enhances the management capabilities by allowing finer control of power states. "Attributes that you put in the protocol allow you to turn things on and off, on the link," said Wietfeldt.

But LLI v2.0 can also be used to interface to other chips. "There is more and more demand on the terminal's graphics for games and so on," said Huloux. "You can imagine the applications processor being connected to the graphics processor through the LLI."

LLI v2.0 works with all three M-PHYs. The transition from LLI v1.0 to v2.0 makes better use of the M-PHY's capacity for the power consumed, Huloux added.

Another benefit of LLI v2.0 is that it supports an inter processor communication scheme. Here, memory pointer messages are sent across the link such that the device at each end knows where to collect data. Unlike sending data in frames or packets, the LLI protocol tells the relevant device where the data resides and its length, so data can be accessed as required. "It is a more indirect way of sending data," said Wietfeldt.
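The pointer-passing idea can be illustrated with a minimal Python sketch. It is not the LLI frame format: the `PointerMessage` type, the `shared_memory` buffer and the `link` queue are hypothetical names invented for this illustration. The sender places data in memory that both sides can reach and sends only its location and length; the receiver then collects the data itself.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class PointerMessage:
    """Hypothetical message: says where the data is, not what it is."""
    offset: int  # start of the data in shared memory
    length: int  # number of bytes to collect


# Stand-ins for the memory both chips can reach and for the link itself.
shared_memory = bytearray(64)
link = deque()


def send(payload: bytes, offset: int) -> None:
    """Write the payload into shared memory, then send only a pointer."""
    shared_memory[offset:offset + len(payload)] = payload
    link.append(PointerMessage(offset, len(payload)))


def receive() -> bytes:
    """Take the next pointer message and collect the data it refers to."""
    msg = link.popleft()
    return bytes(shared_memory[msg.offset:msg.offset + msg.length])


send(b"hello modem", offset=8)
received = receive()
print(received)
```

Only the small pointer message crosses the link in this sketch; the payload stays where it was written until the receiver reads it.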

Thus, LLI v2.0 is a dual mode interface: its low latency removes the need for modem memory, while the inter processor communication scheme enables messages to be exchanged between the modem and the application processor.

The second upgraded MIPI protocol specification, CSI-3 – used to integrate the camera subsystems and bridge devices to the host processor – also uses the M-PHY.

CSI-1 and -2 use the first generation MIPI physical layer device, called D-PHY. This mature design is in wide use and supports 1Gbit/s lanes. CSI-3 differs in that it uses M-PHY – and M-PHY is all about performance in this context, said Wietfeldt.

The growing number of pixels used in handsets' cameras – as an example, Samsung's flagship Galaxy S4 has a 13Mpixel sensor – has led to terminal makers wanting a higher speed interface while keeping the number of pins constant. The bandwidth required by the camera sensor is determined by the pixel count, the bits per pixel – typically 8 to 12bit – and the frame rate – in excess of 30frame/s.

The M-PHY uses a differential pair per lane and, in a typical camera sensor setup, is implemented as an asymmetrical link: four M-PHY lanes are used to take data from the sensor, while one lane runs the other way to allow the sensor's parameters to be programmed on power up. With five lanes at two pins each, the result is 10 pins overall.

Given that the M-PHY Gear 3 can operate at 5.8Gbit/s, the four available lanes could easily handle the data generated, even by a 20Mpixel sensor, said Wietfeldt.
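As a rough check on that claim, the raw pixel rate can be estimated from the three factors the bandwidth depends on: pixel count, bits per pixel and frame rate. The sketch below assumes 12bit pixels at 30frame/s, taken from the ranges quoted in this article, and ignores line coding and protocol overhead; the function name `sensor_bandwidth_gbps` is hypothetical.

```python
GEAR3_LANE_GBPS = 5.8  # M-PHY Gear 3 peak rate per lane, as quoted in the article
DATA_LANES = 4         # lanes carrying data from the sensor to the host


def sensor_bandwidth_gbps(megapixels, bits_per_pixel, frames_per_second):
    """Raw pixel data rate in Gbit/s, with no encoding or protocol overhead."""
    bits_per_second = megapixels * 1e6 * bits_per_pixel * frames_per_second
    return bits_per_second / 1e9


link_capacity = GEAR3_LANE_GBPS * DATA_LANES

for megapixels in (13, 20):
    needed = sensor_bandwidth_gbps(megapixels, bits_per_pixel=12, frames_per_second=30)
    print(f"{megapixels}Mpixel sensor: {needed:.2f}Gbit/s of "
          f"{link_capacity:.1f}Gbit/s available")
```

Under these assumptions a 20Mpixel sensor needs about 7.2Gbit/s of raw data, against roughly 23.2Gbit/s across four Gear 3 lanes, which leaves room for overhead and for higher frame rates.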

The M-PHY specification also supports the direct interfacing of an optical media converter. This allows the optical link to extend the reach of a MIPI interface. Huloux cites the example of connecting a tablet to a display 20cm away or interfacing to an external camera.

The CSI-3 interface is also proving of interest to those in the automotive industry, while the MIPI Alliance is also in talks with a surgical camera maker who is keen to use the interface.

Meanwhile, the MIPI Alliance continues to be involved in work aimed at exploiting USB 3.0 and PCI Express 3.0 within mobile devices. The SuperSpeed InterChip (SSIC) interface specification, based on the 5Gbit/s USB 3.0, is now complete and is the follow on to the High Speed InterChip (HSIC) interface that connects ICs within mobile devices. HSIC, based on USB 2.0, operates at 480Mbit/s.

And the Mobile-PCIe specification, which can exploit the M-PHY's full 5.8Gbit/s speed, is approaching finalisation (see NE, 22 January 2013).

The MIPI Alliance is also investigating what will be needed to support augmented reality handsets, an application believed to require several MIPI interfaces. "This is a multimedia user interface: camera, display and sensor [are needed] to go with augmented reality," said Huloux.

Another area under investigation is how the MIPI Alliance should support its interface specifications with software, said Peter Lefkin, managing director of the MIPI Alliance. Such software includes drivers and applications. "There have been cases where designers developing devices have had to develop their own software and MIPI could help here," said Huloux.

MIPI will kick off an investigation group this year to look at how to support software residing above its PHYs and protocol specifications. "That is how we evaluate what is next," Lefkin concluded.