In April 2013, when he was US president, Barack Obama launched a research initiative aimed at revolutionising our understanding of the human brain. With a budget of around $100million, the BRAIN – Brain Research through Advancing Innovative Neurotechnologies – Initiative looked to develop and apply technologies that explore how the brain records, processes, uses, stores and retrieves information.

As part of this so-called Grand Challenge, the US Defense Advanced Research Projects Agency (DARPA) launched a set of programmes designed to understand the dynamic functions of the brain and to demonstrate breakthrough applications based on these insights.

One element is the Neural Engineering System Design (NESD) programme, announced in January 2016. It aims to develop an implantable neural interface capable of providing ‘unprecedented’ signal resolution and data-transfer bandwidth between the brain and the digital world, all in a biocompatible device with a volume no larger than 1cm³.

One goal of the NESD programme is to develop a ‘deep understanding’ of how the brain processes hearing, speech and vision simultaneously with individual neuron-level precision and at a scale sufficient to represent detailed imagery and sound.

DARPA’s Dr Al Emondi, currently NESD program manager, explained in more detail. “To succeed, NESD requires integrated breakthroughs across disciplines including neuroscience, low-power electronics, photonics, medical device packaging and manufacturing, systems engineering and clinical testing.

“In addition to hardware, NESD performer teams are developing advanced mathematical and neuro-computation techniques to first transcode high-definition sensory information between electronic and cortical neuron representations and then compress and represent those data with minimal loss of fidelity and functionality.”

From the applications it received, DARPA awarded contracts to five research organisations and one company (see box).

DARPA acknowledges that NESD involves significant technical challenges, but believes the teams it has selected have feasible plans not only to deliver coordinated breakthroughs across a range of disciplines, but also to integrate those efforts into end-to-end systems.

But this isn’t a new area of investigation for DARPA. “DARPA has been a pioneer in brain-machine interface technology since the 1970s,” said Justin Sanchez, director of DARPA’s Biological Technologies Office. “We’ve laid the groundwork for a future in which advanced brain interface technologies will transform how people live and work and DARPA will continue to operate at the forward edge of this space.”

Taking advantage of optogenetics

One of the six projects selected by DARPA is led by the Fondation Voir et Entendre (FVE), a Paris-based organisation looking to use technology to overcome sensory handicaps linked to vision and hearing.

Its project – called CorticalSight – will use optogenetics techniques to enable better vision for those with poor sight. And there are many who could benefit. Estimates suggest there are almost 300million people suffering from visual impairment. Of these, 40m are blind and 246m have low vision. There are many reasons why people’s sight is impaired. For some, it’s a birth defect, but for others, it’s something that develops as they age – 65% of the visually impaired are believed to be 50 or older.

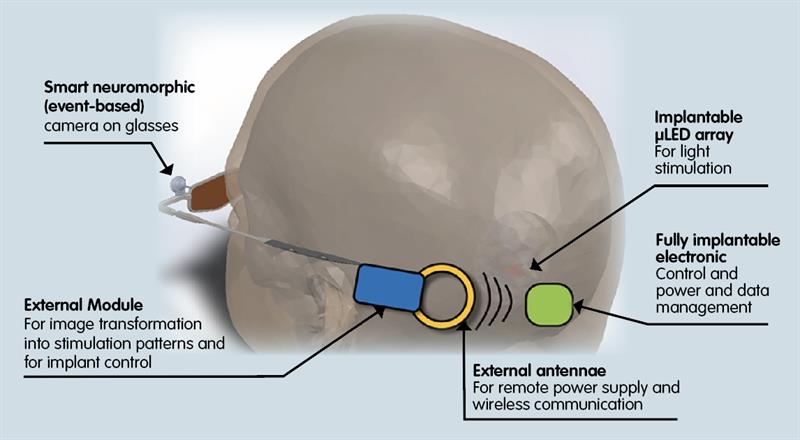

CorticalSight will develop a system that allows communication between a camera-based, high-definition artificial retina worn over the eyes and neurons in the visual cortex. Targeted at those who have lost the eye-to-brain connection, the project will entail developing a system of implanted electronics and optical technology.

Recognising the complexity of what it’s looking to achieve, CorticalSight has brought in expertise from Paris Vision Institute, Chronocam, Gensight, Stanford University, Inscopix and the Friedrich Miescher Institute. French research centre CEA-Leti will also make a significant contribution, leading the development of the active implantable device that will interface with the visual cortex.

Fabien Sauter-Starace is leading Leti’s part of the project. He said: “The idea is to develop a brain-computer interface that can restore vision by stimulation of the visual cortex. Accomplishing that needs a lot of knowledge.”

In FVE’s model, information would be captured by a camera, then sent to an external device worn on the head which would transform the images into stimulation patterns. These would then be transmitted wirelessly to a high-density microLED stimulator array for the visual cortex.

Optogenetics in this instance will attempt to modify neurons in the visual cortex so they convert specific wavelengths of light into electrical activity. The images captured by the camera will be processed computationally to emulate the information processing that normally takes place between the eye and the brain. The resulting patterns will then be transmitted to the implanted LED stimulator, which activates the neurons.
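As a rough illustration of the image-to-pattern step described above, the sketch below block-averages a greyscale camera frame onto a much coarser LED grid and quantises each cell to a small number of drive levels. It is a minimal sketch under stated assumptions, not FVE’s or Leti’s method: the function name, the grid size and the number of drive levels are all hypothetical.

```python
def image_to_stimulation_pattern(image, grid_rows, grid_cols, levels=4):
    """Map a greyscale frame (rows of 0-255 ints) onto an LED grid.

    Hypothetical helper: each LED receives the mean brightness of the
    image block it covers, quantised to an integer in 0..levels-1.
    """
    height = len(image)
    width = len(image[0])
    if height % grid_rows or width % grid_cols:
        raise ValueError("image size must be a multiple of the grid size")

    block_h = height // grid_rows
    block_w = width // grid_cols
    pattern = []
    for r in range(grid_rows):
        row = []
        for c in range(grid_cols):
            # Sum the pixels in the block covered by LED (r, c).
            total = 0
            for y in range(r * block_h, (r + 1) * block_h):
                for x in range(c * block_w, (c + 1) * block_w):
                    total += image[y][x]
            mean = total / (block_h * block_w)
            # Quantise 0..255 brightness to an assumed drive level.
            row.append(min(levels - 1, int(mean * levels / 256)))
        pattern.append(row)
    return pattern


# A 4x4 frame with a bright top-left corner, mapped onto a 2x2 LED grid.
frame = [
    [255, 255, 0, 0],
    [255, 255, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
pattern = image_to_stimulation_pattern(frame, grid_rows=2, grid_cols=2)
```

A real system would also have to account for the wireless link, the response of the modified neurons and the thermal limits of the implant, none of which is modelled here.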

Sauter-Starace said there are two main options. “You can either stimulate the cortex via electronics, but the more difficult approach is to use optogenetics. Neurons are sensitive to different wavelengths; it’s about balancing chemical and optical resolutions.”

The ‘camera’ in the FVE project is likely to be a prosthetic eye created by French company Chronocam, recently renamed as Prophesee. Sauter-Starace said: “This gives a very good and accurate image. What we’re doing at Leti is working on a system to transfer that image to the brain.”

Image caption: The CorticalSight project will use optogenetics techniques to enable better vision for those with poor sight.

In doing so, Leti is building upon existing technology. “There are some ‘technological bricks’ which we can use,” Sauter-Starace observed. “Others will be generated or updated; for example, to increase the data rate.”

At the other end of the system, there will be the need for something which can drive a dedicated system of micro optical sources. “We’re using technology originally developed for micro displays,” Sauter-Starace continued. “We’re adapting this to the project’s needs by changing the wavelengths of light generated and reducing power consumption. And we also face thermal constraints.”

Neurograins

The team led by Brown University aims to create what it calls a ‘cortical intranet’. This will comprise tens of thousands of wireless micro-devices – each about the size of a grain of salt – that can be implanted onto or into the cerebral cortex. These implants – neurograins – will operate independently and be capable of interfacing with the brain at the single neuron level. The neurograins will be controlled wirelessly by an electronic patch worn on or beneath the skin.

“What we’re developing is essentially a micro-scale wireless network in the brain, enabling us to communicate directly with neurons on a scale that hasn’t previously been possible,” said Professor Arto Nurmikko.

The project will pose many technical challenges, according to Prof Nurmikko. “We need to make the neurograins small enough to be minimally invasive, but with extraordinary technical sophistication.” The solution, he believes, will require state-of-the-art microscale semiconductor technology. “Additionally, we have the challenge of developing the wireless external hub that can process the signals generated by large populations of spatially distributed neurograins at the same time.”

While the best brain-computer interfaces of the moment work with around 100 neurons, the team wants to start at 1000 neurons and build to 100,000.

“When you increase the number of neurons tenfold, you increase the amount of data you need to manage by much more than that because the brain operates through nested and interconnected circuits,” Prof Nurmikko said. “So this becomes an enormous big data problem for which we’ll need to develop new computational neuroscience tools.”
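One simple way to see why the data grows faster than the neuron count, offered as an illustration rather than as Prof Nurmikko’s own model: if any two recorded neurons might interact, the number of possible pairs is n(n-1)/2, which grows roughly with the square of n. The function name below is hypothetical.

```python
def possible_pairs(neurons):
    """Number of distinct neuron pairs among `neurons` recorded cells."""
    return neurons * (neurons - 1) // 2


# Compare today's ~100-neuron interfaces with the team's stated targets.
for n in (100, 1_000, 10_000, 100_000):
    print(f"{n:>7} neurons -> {possible_pairs(n):>13,} possible pairs")

# Going from 1,000 to 10,000 neurons is a tenfold increase in neurons
# but roughly a hundredfold increase in possible pairs.
growth = possible_pairs(10_000) / possible_pairs(1_000)
```

Pairwise counting is only the crudest proxy for ‘nested and interconnected circuits’; larger groupings of neurons would grow faster still.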

Box: DARPA’s six NESD projects

DARPA has selected six projects in the first phase of the NESD programme.

• Brown University is looking to decode neural processing of speech. Its proposed interface would be composed of networks of up to 100,000 ‘neurograins’.

• Columbia University aims to develop a non-penetrating bioelectric interface to the visual cortex using a flexible CMOS integrated circuit containing an electrode array.

• Fondation Voir et Entendre aims to apply optogenetics techniques to enable communication between neurons in the visual cortex and a camera-based, high-definition artificial retina worn over the eyes.

• John B Pierce Laboratory will develop an interface system in which optogenetically modified neurons communicate with an all-optical prosthesis.

• Paradromics aims to use large arrays of penetrating microwire electrodes to create a high data rate cortical interface for recording and stimulation of neurons and to bond these wires to specialised CMOS electronics.

• University of California, Berkeley aims to develop a ‘light field’ holographic microscope that can detect and modulate the activity of up to 1million neurons in the cerebral cortex and to use photo-stimulation patterns to replace lost vision or serve as a brain-machine interface.

Silicon nanoelectronics

At Columbia University, Professor Ken Shepard is looking to develop an implanted brain-interface device that could transform the lives of the hearing and visually impaired.

“If we are successful,” he said, “the tiny size and massive scale of this device could provide the opportunity for transformational interfaces to the brain, including direct interfaces to the visual cortex that would allow patients who have lost their sight to discriminate complex patterns at unprecedented resolutions.”

In Prof Shepard’s opinion, the DARPA project is working to aggressive timescales. “We think the only way to achieve this is to use an all-electrical approach that involves a massive surface-recording array with more than 1million electrodes fabricated as a monolithic device on a single CMOS integrated circuit. We are working with TSMC as our foundry partner.”

The implanted chips will be conformable, very light and flexible enough to move with the tissue. “By using state-of-the-art silicon nanoelectronics and applying it in unusual ways, we are hoping to have big impact on brain-computer interfaces,” Prof Shepard said.

Cortical modem

Researchers in the UC Berkeley team call the device they are developing a cortical modem – a way of ‘reading’ from and ‘writing’ to the brain.

“The ability to talk to the brain has the incredible potential to help compensate for neurological damage caused by degenerative diseases or injury,” said Professor Ehud Isacoff. “By encoding perceptions into the human cortex, you could allow the blind to see or the paralysed to feel touch.”

Reading from and writing to neurons will require a two-tiered device, according to the Berkeley team. Its reading device will be a miniaturised microscope mounted on a small window in the skull. Capable of seeing up to 1million neurons at a time, the microscope will be based on the ‘light field camera’, which captures light through an array of lenses and reconstructs images computationally.
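To give a flavour of what ‘reconstructs images computationally’ can mean, the sketch below shows shift-and-add refocusing, a textbook light field technique, reduced to one dimension. It is not the Berkeley team’s algorithm: the function name, the data layout and the integer `alpha` focus parameter are assumptions made for illustration. Each lens sees the scene from a slightly different position, so shifting each view in proportion to its lens offset and averaging brings one depth into focus.

```python
def refocus(views, alpha):
    """Shift-and-add refocusing of 1D views from an array of lenses.

    views: dict mapping an integer lens offset to a list of intensities
           (all lists the same length).
    alpha: assumed integer shift, in samples, per unit of lens offset;
           choosing alpha selects which depth ends up in focus.
    """
    length = len(next(iter(views.values())))
    result = [0.0] * length
    for offset, samples in views.items():
        shift = offset * alpha
        for i in range(length):
            j = i + shift
            if 0 <= j < length:  # samples shifted off the edge count as zero
                result[i] += samples[j]
    return [value / len(views) for value in result]


# A single bright point seen by three lenses, displaced by one sample
# per lens (position 4, 5 and 6 for offsets -1, 0 and +1).
views = {
    -1: [0, 0, 0, 0, 1, 0, 0, 0, 0, 0],
    0:  [0, 0, 0, 0, 0, 1, 0, 0, 0, 0],
    1:  [0, 0, 0, 0, 0, 0, 1, 0, 0, 0],
}
in_focus = refocus(views, alpha=1)  # views align: the point is sharp
blurred = refocus(views, alpha=0)   # no shift: the point is smeared
```

With the matching `alpha`, the three views line up and the point keeps its full brightness at one sample; with the wrong value, the same light is spread over three samples.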

For the writing component, the team is looking to project light patterns onto neurons using 3D holograms. This, it contends, will stimulate groups of neurons in a way that reflects normal brain activity.

“We’re not just measuring from a combination of neurons of many, many different types singing different songs,” Prof Isacoff said. “We plan to focus on one subset of neurons that performs a certain kind of function in a certain layer spread out over a large part of the cortex. Reading activity from a larger area of the brain allows us to capture a larger fraction of the visual or tactile field.”

Challenging timeframe

The NESD programme is expected to last four years, with three phases. “The six projects are competing with each other,” Sauter-Starace concluded. “We’re in the first phase of NESD and it may be that teams will be merged in the future. But, for the moment, we’re in a parallel competition and it’s a challenging timeframe.”
